You want fast wins from ux testing. Great choice. ux testing helps you see what your users do, not just what they say. When you avoid common traps, ux testing gives clear, quick results. You fix pages that block signups. You smooth flows that drag. You raise conversion rate with simple moves. We will walk through what ux testing is, why it matters, and the top mistakes to skip. We will also share easy fixes, workflows, tools, and a short case study. By the end, you will feel ready to run ux testing this week and see gains soon.

What to expect:

- A simple view of ux testing vs usability testing and research

- Ten common mistakes in ux testing, plus quick fixes

- A step-by-step plan you can copy

- Plain language tips for busy teams and founders

What is ux testing and why it matters

ux testing is a way to watch real people try to do tasks in your product. You give them simple goals. You see where they get stuck. You learn what to improve next. ux testing is different from usability testing and user research, but they are close cousins:

- ux testing focuses on task flow and outcomes in a real or close-to-real product.

- usability testing checks ease of use in specific screens or features.

- user research looks wider at needs, feelings, and context.

ux testing links to A/B testing, conversion rate optimization (CRO), and product choices. Here is how they fit:

- Use ux testing to learn why people fail or succeed.

- Use A/B testing to prove which fix works best at scale.

- Use CRO to plan and track the wins.

- Use the insights to shape your roadmap and design.

Key outcomes to track from ux testing:

- Task success: did users finish the job?

- Time on task: how long did it take?

- Errors and drops: where did they quit?

- NPS and CSAT: how did it feel?

- Revenue impact: did it lift conversion rate and average order value?
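Here is a minimal sketch of how the first three outcomes could be tallied after a round. The session records, field names, and numbers are made up for illustration; swap in whatever your note-taking tool exports.

```python
from statistics import median

# Hypothetical session records: one dict per participant for a single task.
sessions = [
    {"user": "P1", "completed": True, "seconds": 95, "errors": 1},
    {"user": "P2", "completed": True, "seconds": 140, "errors": 0},
    {"user": "P3", "completed": False, "seconds": 300, "errors": 4},
    {"user": "P4", "completed": True, "seconds": 110, "errors": 2},
    {"user": "P5", "completed": False, "seconds": 280, "errors": 3},
]


def summarize(sessions):
    """Return task success, median time on task (completers only), and errors per session."""
    if not sessions:
        raise ValueError("need at least one session")
    done = [s for s in sessions if s["completed"]]
    return {
        # Share of participants who finished the task.
        "task_success": len(done) / len(sessions),
        # Median is less sensitive to one very slow participant than the mean.
        "median_seconds_completed": median(s["seconds"] for s in done) if done else None,
        # Average error count across all participants, finished or not.
        "errors_per_session": sum(s["errors"] for s in sessions) / len(sessions),
    }


summary = summarize(sessions)
print(summary)
```

Time on task is reported only for people who finished, since a timed-out session mostly tells you where the limit was set.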

Related help you might add later: a ux audit, help from a ux design agency, or even ux design consulting firms. You can also hire ui ux designers, explore ai for ux design, or test UX AI Tools that speed up notes and clips.

Top 10 ux testing mistakes to avoid fast

Mistake 1: Starting ux testing without a clear hypothesis

Impact: If you start ux testing with no clear idea, you get fuzzy notes and long debates. People argue about tiny stuff. Nothing ships.

Quick fix:

- Write a SMART hypothesis. Example: “If we simplify the signup form from 10 to 4 fields, task success will rise by 20%.”

- Tie each hypothesis to a business KPI like conversion rate, signup completion, or revenue per visit.

Metrics to watch:

- Conversion rate by step

- Task completion and error count

State the hypothesis in your plan and notes so your team stays aligned: “This ux testing run will validate the signup hypothesis.”
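To make "conversion rate by step" concrete, here is a small sketch. The step names and counts are hypothetical; in practice they would come from your analytics funnel.

```python
# Hypothetical counts of users who reached each signup step.
funnel = [
    ("landing", 1000),
    ("form_start", 400),
    ("form_submit", 220),
    ("email_verified", 180),
]


def step_conversion(funnel):
    """Return (transition label, conversion rate) for each pair of adjacent steps."""
    rows = []
    for (prev_name, prev_n), (name, n) in zip(funnel, funnel[1:]):
        rate = n / prev_n if prev_n else 0.0
        rows.append((f"{prev_name} -> {name}", rate))
    return rows


rows = step_conversion(funnel)
for label, rate in rows:
    print(f"{label}: {rate:.0%}")

# The step with the lowest rate is a reasonable place to aim a hypothesis.
weakest = min(rows, key=lambda r: r[1])
print("Weakest step:", weakest[0])
```

With these made-up numbers the biggest loss is between landing and starting the form, so the hypothesis would target that step first.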

Mistake 2: Testing the wrong users for ux testing

Impact: If you bring in the wrong users, the results mislead you. You ship changes that your real audience does not need.

Quick fix:

- Create a short screener. Ask about needs, device type, and intent.

- Recruit by segment. For example, new buyers vs repeat buyers.

- Keep going until feedback repeats. That is called qualitative saturation.

Metrics:

- Cohort-by-cohort results

- Repeat themes across sessions

If you need help, consider a ux design services partner or look at Top UX Agencies that excel at recruiting. Some teams use ux testing services to run fast, clean studies.

Mistake 3: Underpowered ux testing sample sizes

Impact: With too few people, you may chase noise. With too many, you waste time. Underpowered ux testing gives weak confidence.

Quick fix:

- Plan your sample before you start. For simple flows, 5–7 users can reveal most issues. For higher stakes, go 10–15.

- Prioritize effect size. You do not need perfect math to see a big, clear problem.

Metrics:

- Confidence in findings across users

- Repeatability of issues session to session

Keep it simple. ux testing is about clear patterns, not perfect statistics. Save strict proof for A/B tests.
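If you want a rough feel for why a handful of users goes a long way, one common model treats each user as having the same chance `p` of hitting a given issue. The sketch below assumes `p = 0.31`, an often-quoted average from older usability studies; that value is an assumption, and your product may be quite different.

```python
import math


def share_of_issues_found(n_users, p=0.31):
    """Expected share of issues seen at least once, assuming each user
    independently hits any given issue with probability p (an assumption)."""
    if not 0 < p < 1:
        raise ValueError("p must be between 0 and 1")
    return 1 - (1 - p) ** n_users


def users_needed(target, p=0.31):
    """Smallest number of users whose expected coverage reaches the target share."""
    if not 0 < target < 1 or not 0 < p < 1:
        raise ValueError("target and p must be between 0 and 1")
    return math.ceil(math.log(1 - target) / math.log(1 - p))


for n in (3, 5, 7, 10, 15):
    print(n, "users ->", f"{share_of_issues_found(n):.0%}")

print("Users for 80% coverage:", users_needed(0.80))
```

The curve flattens quickly, which is why adding users past a certain point mostly repeats what you already saw. Rare issues (a low `p`) need more sessions, so higher-stakes flows justify the larger sample.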

Mistake 4: Leading questions in ux testing scripts

Impact: If you lead the witness, users tell you what you want to hear. That makes ux testing look good but hides the truth.

Quick fix:

- Use neutral words: “What would you do next?” not “Would you click the big green button?”

- Pilot your script with one teammate and one user. Fix any hints or bias.

Metrics:

- Quality of verbatim quotes

- Agreement between note-takers (inter-rater reliability)

Make a plain script template. Keep questions short. Let silence work. This keeps ux testing honest and useful.
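One way to put a number on agreement between two note-takers is Cohen's kappa, which corrects raw agreement for chance. This is a minimal sketch; the tags and the two lists of codes are invented for the example.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who each labeled the same items once."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: based on how often each rater used each label.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical label for everything
    return (observed - expected) / (1 - expected)


# Hypothetical tags two note-takers gave to the same eight observations.
rater_a = ["nav", "copy", "error", "nav", "mobile", "copy", "nav", "error"]
rater_b = ["nav", "copy", "error", "copy", "mobile", "copy", "nav", "nav"]

kappa = cohens_kappa(rater_a, rater_b)
print(f"kappa = {kappa:.2f}")
```

A low value usually means the tag definitions are fuzzy, not that one note-taker is wrong. Tighten the tag set and try again.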

Mistake 5: Low-fidelity prototypes blocking ux testing insights

Impact: If your prototype lacks real data or true states, users react to the wrong thing. ux testing then flags issues that will not appear in the live build.

Quick fix:

- Match fidelity to the goal. For flow checks, mid-fidelity is fine. For microcopy or price clarity, use real content and near-live UI.

- Add realistic data: shipping fees, taxes, stock status, and error states.

Metrics:

- Drop-off by step

- Error rates on key fields

When in doubt, test with the closest thing to live. Many teams pair ux testing with a quick ux audit to spot gaps in content and states.

Mistake 6: Ignoring mobile-first ux testing

Impact: On many sites, most users come from phones. If you skip mobile in ux testing, you miss the biggest pain. You risk cart drops and slow taps.

Quick fix:

- Set device quotas: phone first, then desktop and tablet.

- Test on real networks. A 3G connection or crowded Wi‑Fi shows true friction.

- Check thumbs, tap targets, and text size.

Metrics:

- Mobile conversion vs desktop

- Tap accuracy, zoom events, latency

Add “mobile-first” to every ux testing plan. Simple changes like larger hit areas, fewer steps, and sticky CTAs can lift wins fast.

Mistake 7: Mixing ux testing with live A/B testing signals

Impact: If you run ux testing and A/B testing at the same time on the same users, you may mix learning with validation. This causes confusion and bad rollouts.

Quick fix:

- Separate learning and proving. Use ux testing to find issues and ideas. Use A/B testing later to measure impact at scale.

- Freeze big changes during ux testing sessions.

Metrics:

- Variance in key metrics before vs after the change

- Guardrail metrics like bounce rate, error rate, and time on page

Think of ux testing as the lab and A/B testing as the field. You need both, but not at the same time on the same people.
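For the "proving" half, a common choice is a two-proportion z-test on conversion counts. The sketch below uses only the standard library, and the visitor and conversion numbers are hypothetical. Your A/B tool may use a different method.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) comparing conversion rates of A and B."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the assumption that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test when the pooled rate is 0 or 1")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: control converts 500 of 10,000, variant 590 of 10,000.
z, p_value = two_proportion_z_test(500, 10_000, 590, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

Decide the sample size and the significance threshold before the test starts. Peeking and stopping early makes the p-value look better than it is.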

Mistake 8: Skipping accessibility in ux testing

Impact: If you skip accessibility, you leave people out and risk problems. Good ux testing includes users with different needs and tools.

Quick fix:

- Add basic checks aligned with the Web Content Accessibility Guidelines (WCAG).

- Include people who use screen readers, keyboard nav, or high-contrast modes.

- Keep color contrast and focus states strong.

Metrics:

- Keyboard reachability

- Contrast compliance and error-free sessions

Accessibility is part of quality. Pair ux testing with ux audit services to catch quick wins across forms, buttons, and alerts.
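Contrast compliance is one check you can automate. The sketch below follows the WCAG 2.x contrast ratio formula and uses the 4.5:1 AA threshold for normal-size text. The function names and sample colors are just for illustration.

```python
def _linear(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1 (same color) up to 21 (black on white)."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(foreground, background):
    """True if the pair meets the 4.5:1 AA threshold for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


for fg in ("#000000", "#767676", "#777777"):
    ratio = contrast_ratio(fg, "#ffffff")
    print(fg, f"{ratio:.2f}", "pass" if passes_aa_normal_text(fg, "#ffffff") else "fail")
```

A script like this only covers color. Keyboard reachability and screen reader flow still need real sessions with real people.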

Mistake 9: Poor task design for ux testing sessions

Impact: Bad tasks make users act in odd ways. Then ux testing shows fake problems. The team wastes time.

Quick fix:

- Write goal-based tasks: “Find and buy a red T-shirt for under $20.”

- Add realistic limits: “Use a guest checkout.”

- Avoid step-by-step hints.

Metrics:

- Task success and time on task

- Error types and recovery paths

Good tasks tell a simple story. The user knows what they want. You watch how they try to get it. That is the heart of ux testing.

Mistake 10: Weak synthesis after ux testing

Impact: Many teams run sessions and stop. Notes sit in slides. No one owns the fix. The backlog never changes.

Quick fix:

- Do a quick affinity map. Group notes by theme.

- Rate severity and impact. Assign owners and due dates.

- Share a one-page summary with clips.

Metrics:

- Issue resolution rate

- Time-to-fix and follow-up A/B results

Make synthesis a habit. Schedule it on the calendar right after sessions. ux testing only helps when it leads to shipped change.

Practical solutions and workflows

Test planning checklist: objectives, KPIs, audience, risks

Before each round of ux testing:

- Objectives: What decision will this test inform?

- KPIs: conversion rate, task success, time on task

- Audience: segments, devices, and accessibility needs

- Risks: technical limits, time, data privacy

Add related support like a quick ux audit or help from a ux design agency. If you prefer in-house, many ux design consulting firms offer training and templates.

Recruitment playbook: channels, incentives, quotas

- Channels: email lists, in-product invites, user panels, or social groups

- Incentives: gift cards or discounts

- Quotas: device mix, new vs repeat users, and accessibility needs

- Consent: keep it simple and clear

When time is tight, consider ux testing services. They can find users fast and save your team hours.

Session setup: tools, consent, environment controls

- Tools: choose reliable ux testing tools with screen share and recording

- Consent: record with permission, state how you will use data

- Environment: stable internet, minimal noise, real devices

- Backup: have a second link and a spare moderator ready

Using ai for ux design? Try UX AI Tools that auto-tag clips or summarize notes. They do not replace people, but they make ux testing faster.

Analysis workflow: notes, clips, tags, triage

- Notes: capture quotes and errors in real time

- Clips: mark key moments while recording

- Tags: use a simple tag set like “nav,” “copy,” “error,” “mobile”

- Triage: rate each issue by severity and effort

- Plan: add top items to the sprint backlog with owners

This workflow turns raw ux testing into action.
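The triage step can be as simple as a sorted list. This sketch assumes a 1 to 4 severity scale and a 1 to 5 effort scale, and ranks by severity divided by effort. The scales, the ranking rule, and the scores are all assumptions; the issue names echo a typical checkout study.

```python
# Hypothetical issues with team-assigned scores.
issues = [
    {"issue": "Confusing shipping fees", "tag": "copy", "severity": 4, "effort": 2},
    {"issue": "Tiny error messages", "tag": "error", "severity": 3, "effort": 1},
    {"issue": "Slow mobile fields", "tag": "mobile", "severity": 3, "effort": 3},
]


def triage(issues):
    """Sort issues so high-severity, low-effort items come first.
    Ties on the ratio are broken by higher severity."""
    return sorted(
        issues,
        key=lambda i: (-(i["severity"] / i["effort"]), -i["severity"]),
    )


ranked = triage(issues)
for rank, item in enumerate(ranked, start=1):
    score = item["severity"] / item["effort"]
    print(f"{rank}. {item['issue']} [{item['tag']}] score={score:.1f}")
```

Treat the score as a conversation starter. A severe issue with high effort may still deserve a slot if it blocks the main flow.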

ux testing metrics that drive decisions

Core metrics: task success, time on task, SUS, CSAT

- Task success: percent of users who finish the goal

- Time on task: faster is not always better, but huge delays signal friction

- SUS (System Usability Scale): a simple 10-question score

- CSAT: quick rating of satisfaction

Make sure these appear in every ux testing report.
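SUS scoring trips people up because odd and even questions are scored in opposite directions. Here is a small sketch of the standard scoring rule; the sample answers are invented.

```python
def sus_score(responses):
    """Score one participant's SUS questionnaire.

    responses: ten answers in question order, each from 1 (strongly disagree)
    to 5 (strongly agree). Returns a score from 0 to 100.
    """
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs exactly ten answers, each from 1 to 5")
    total = 0
    for number, answer in enumerate(responses, start=1):
        if number % 2 == 1:
            total += answer - 1   # odd questions are positively worded
        else:
            total += 5 - answer   # even questions are negatively worded
    return total * 2.5


# Hypothetical answers from one participant.
answers = [4, 2, 5, 1, 4, 2, 5, 2, 4, 1]
score = sus_score(answers)
print(score)
```

Score each participant separately, then average the scores. The result is a 0 to 100 scale, not a percentage.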

Business metrics: conversion rate, AOV, retention

- Conversion rate: did more users complete the key action?

- AOV: did average order value change after fixes?

- Retention: did users return within 7 or 30 days?

ux testing should link to business outcomes. That is how you earn time and budget.

Confidence and power for moderated vs unmoderated tests

- Moderated ux testing gives rich insights with fewer users.

- Unmoderated tests scale fast but need tight tasks and checks.

- Mix both. Start moderated to learn the “why,” then use unmoderated to confirm the “what.”

Keep your approach simple. Use small, steady ux testing cycles to build confidence.

Tools and templates for ux testing

Research repository and tagging standards

Set up a simple repository to store notes, clips, and insights. Use standard tags, owners, dates, and links to tickets. This keeps ux testing learnings easy to find and share.

Script, screener, and reporting templates

- Script: tasks, neutral prompts, and wrap-up questions

- Screener: a few questions to pick the right users

- Report: one pager with goals, methods, key issues, fixes, and next steps

Templates speed up every ux testing round.

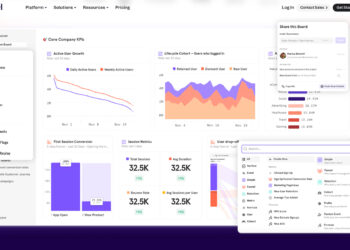

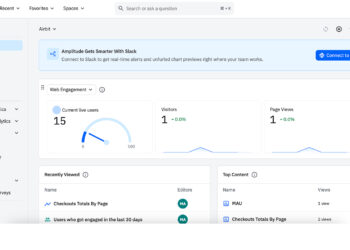

Recommended stack: remote testing, analytics, heatmaps

- Remote ux testing tools for live sessions

- Analytics for funnels and cohorts

- Heatmaps and session replays for click and scroll patterns

- ux research tools for surveys and quick polls

If you need outside help, check Top UX Agencies or a trusted ux agency. You can also hire ui ux designers for short sprints.

Case study outline: fixing a checkout with ux testing

Problem: high drop-off at payment step

A retail site had good traffic but weak sales. Most users quit at payment. The team ran ux testing to learn why.

What we saw:

- Confusing shipping fees

- Tiny error messages

- Slow mobile fields

Approach: hypothesis, sessions, A/B follow-up

Hypothesis: “If we show clear total cost early and fix form errors, task success will rise.”

Steps:

- Plan a mobile-first ux testing round with 10 users.

- Use realistic data and full price details.

- Record clips of confusion and error loops.

- Synthesize findings and rate severity.

- Ship fixes: early cost summary, bigger inputs, and clearer errors.

- Run an A/B test to measure lift.

Outcome: uplift in conversion and reduced support tickets

Results:

- Task success up 28%

- Checkout time down 35%

- Support tickets about payment down 40%

- Live A/B conversion rate up 18%

The mix of ux testing and an A/B test showed that the change worked. The team kept this loop for new features.

Implementation timeline for ux testing in agile sprints

Sprint -1: backlog, hypotheses, prototypes

- Pick the top user pain from analytics and a ux audit

- Write one or two testable hypotheses

- Build a realistic prototype or use a near-live build

Sprint 0: recruit, pilot, run sessions

- Recruit by segment and device

- Pilot the script with one user

- Run 5–10 sessions, record and tag clips

- Start a simple repository for findings

Sprint 1+: synthesize, prioritize, ship fixes, validate

- Affinity map the notes

- Pick top 3 fixes with highest impact and lowest effort

- Ship improvements

- Validate with A/B testing or follow-up ux testing

This rhythm keeps ux testing lean and steady.

Common objections to ux testing and quick rebuttals

- “We already have analytics.” Analytics shows the “what.” ux testing shows the “why.” You need both.

- “No time.” One hour of planning, one hour of sessions, and one hour of synthesis can fit in a day. Try hallway studies for even faster ux testing.

- “Too expensive.” A small round of ux testing can prevent a costly launch mistake. The ROI is strong when tied to conversion rate and churn.

- “We know our users.” Great. Verify with fresh eyes. Markets change. ux testing keeps you honest.

If you want help, look into ux design services or partner with a trusted ux agency. Many offer ux audit services and on-demand ux testing services to speed things up.

ux testing checklist

Use this quick list before you start your next ux testing round:

- Objectives defined and prioritized

- Right users recruited and consented

- Bias-free tasks and scripts prepared

- Realistic prototypes and data ready

- Metrics and success criteria set

- Synthesis plan and ownership assigned

Keep this checklist in your research repository. It makes every ux testing run smoother.

FAQs about ux testing

How often should teams run ux testing during releases?

Run ux testing in small cycles. Aim for once per sprint or at each big feature milestone. Even a few sessions can catch major issues before launch.

What’s the difference between ux testing and A/B testing?

ux testing helps you learn why users get stuck or succeed. A/B testing proves which change works best at scale. Use ux testing first to find ideas, then A/B to measure impact.

How many participants are enough for ux testing?

For flow problems, 5–7 users can reveal most issues. For higher risk features, go 10–15. Add more if feedback is mixed or you have many segments or devices.

Should ux testing be moderated or unmoderated?

Both work. Moderated ux testing gives rich detail and follow-up questions. Unmoderated scales faster and costs less. Many teams start moderated and then confirm with unmoderated.

Which metrics matter most for ux testing success?

Start with task success, time on task, and errors. Tie them to business metrics like conversion rate, AOV, and retention. This link shows the real value of ux testing.

Conclusion and next steps

You now have a clear path to better results with ux testing. You learned what ux testing is, how it links to CRO and product choices, and the ten big mistakes to avoid. You saw easy fixes for each mistake, a simple workflow, and a short case study with real wins. You also have a checklist and FAQs to guide your next steps.

What to do now:

- Pick one flow that matters this week.

- Write one SMART hypothesis.

- Recruit 5–7 users with a short screener.

- Run a clean round of ux testing on real devices.

- Synthesize, ship one fix, and validate with an A/B test.

If you need extra hands, explore a ux audit, compare Top UX Agencies, or talk to a trusted ux design agency. You can also hire ui ux designers for a sprint, try AI for UX design, and test new UX AI Tools and ux research tools to move faster. Ready to turn insights into growth? Start your first ux testing session today, and watch your conversion rate climb.